Vision FPGA System on Module Based on the iCE40 UP5K


An FPGA-based SoM with integrated vision, audio, and motion-sensing capability

Project Overview

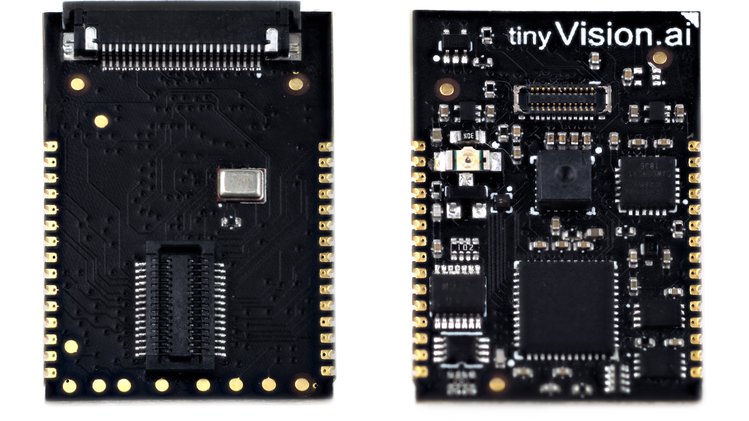

The Vision FPGA SoM is a System on Module based on the iCE40 UP5K FPGA. It integrates a low-power QVGA vision sensor, a 3-axis accelerometer/gyroscope, and an I2S MEMS microphone in a small form factor (3 cm × 2 cm).

Low-Power Image Sensor + FPGA: Overall power consumption will be minimized, targeting the 10-20 mW range, to enable battery-powered applications.
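To get a feel for what the 10-20 mW target means in practice, the sketch below estimates runtime by dividing the energy stored in a battery by the average load power. The 1000 mAh, 3.7 V LiPo cell in the usage example is a hypothetical choice, not part of the SoM, and the estimate ignores regulator losses, self-discharge, and capacity derating, so real runtimes will be shorter.

```c
#include <assert.h>

/* Rough runtime estimate (hours) for a battery of `capacity_mah` at nominal
 * voltage `battery_v` powering a constant load of `load_mw` milliwatts.
 * Assumption: ideal conversion; regulator losses, self-discharge and
 * capacity derating are all ignored. */
static double battery_life_hours(double capacity_mah, double battery_v, double load_mw)
{
    assert(capacity_mah > 0.0 && battery_v > 0.0 && load_mw > 0.0);
    double energy_mwh = capacity_mah * battery_v; /* mAh x V = mWh */
    return energy_mwh / load_mw;                  /* mWh / mW = h  */
}
```

Under those assumptions, `battery_life_hours(1000.0, 3.7, 20.0)` gives 185 hours (about 7.7 days) at 20 mW, and twice that at 10 mW.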

Well-Designed Host Interface: Easy to integrate into a larger system through an interrupt-driven SPI host interface, with programmable I/O voltages for a glueless hardware interface.

Modular Design: Complexity will be localized to the SoM so that developers can quickly integrate the device into their system using a breadboard and a simple API. All required components for vision, audio, and motion (e.g., the image sensor and illumination) will be integrated, so developers don't have to cobble together parts. The design will translate into a final product with minimal changes.

Flexible and Easy to Use: The Vision FPGA SoM is designed to be easy to integrate mechanically and electrically. To the host it will appear as an SPI device, supported by a software library. The host will be able to configure the FPGA's configuration RAM (CRAM) directly, instead of having to program the flash and then reset the FPGA to load the new configuration. Developers will also have flexibility in choosing an image sensor, with a reasonable low-cost default option provided.
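Loading CRAM directly means the host acts as the configuration master while the iCE40 boots in SPI slave mode. The sketch below follows the general sequence described in Lattice's iCE40 programming and configuration technical note as we understand it: hold CRESET_B low with SPI_SS_B low, release reset, wait for the configuration memory to clear, stream the bitstream, then send extra dummy clocks until CDONE rises. It assumes the host directly controls the CRESET_B, SPI_SS_B, SPI, and CDONE lines. The `ice40_hal` struct and all `mock_*` functions are hypothetical placeholders for your MCU's GPIO/SPI drivers, and the delay and dummy-clock counts should be checked against the current Lattice documentation.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical host-side hooks; map these onto your MCU's GPIO/SPI driver. */
typedef struct {
    void (*set_creset_b)(int level);                   /* drive FPGA CRESET_B     */
    void (*set_ss_b)(int level);                       /* drive SPI_SS_B (CS)     */
    void (*spi_write)(const uint8_t *buf, size_t len); /* clock out bytes, MSB first */
    void (*delay_us)(uint32_t us);
    int  (*read_cdone)(void);                          /* sample FPGA CDONE pin   */
} ice40_hal;

/* Load `bitstream` into iCE40 CRAM in SPI slave mode.
 * Returns 0 on success, -1 if CDONE did not go high.
 * Timings are our reading of the Lattice configuration note; verify them. */
static int ice40_load_cram(const ice40_hal *hal, const uint8_t *bitstream, size_t len)
{
    static const uint8_t zeros[16] = {0}; /* zero bytes used as dummy clocks */
    assert(hal != NULL && bitstream != NULL && len > 0);

    /* Reset with SS_B low so the FPGA selects SPI slave mode instead of flash. */
    hal->set_ss_b(0);
    hal->set_creset_b(0);
    hal->delay_us(1);            /* minimum reset pulse is well under 1 us */
    hal->set_creset_b(1);
    hal->delay_us(1200);         /* let the FPGA clear its configuration memory */

    /* 8 dummy clocks with SS_B high, then the whole bitstream with SS_B low. */
    hal->set_ss_b(1);
    hal->spi_write(zeros, 1);
    hal->set_ss_b(0);
    hal->spi_write(bitstream, len);
    hal->set_ss_b(1);

    hal->spi_write(zeros, 13);   /* 104 clocks: give CDONE time to rise */
    if (!hal->read_cdone())
        return -1;
    hal->spi_write(zeros, 7);    /* 56 more clocks (>= 49) to release user I/O */
    return 0;
}

/* --- Hypothetical mock HAL for exercising the sequence on a PC --- */
static int mock_creset = -1, mock_ss = -1, mock_cdone_level = 1;
static size_t mock_bytes_sent = 0;
static void mock_set_creset_b(int level) { mock_creset = level; }
static void mock_set_ss_b(int level) { mock_ss = level; }
static void mock_spi_write(const uint8_t *buf, size_t len) { (void)buf; mock_bytes_sent += len; }
static void mock_delay_us(uint32_t us) { (void)us; }
static int mock_read_cdone(void) { return mock_cdone_level; }
static const ice40_hal mock_hal = {
    mock_set_creset_b, mock_set_ss_b, mock_spi_write, mock_delay_us, mock_read_cdone
};
```

On hardware, the mock functions would be replaced by ones that toggle real pins. Sending dummy clocks as zero bytes keeps the code portable to SPI peripherals that only generate SCK while transferring data.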

Strong Set of Features: This will not be just another FPGA board clone. A reasonably sized SRAM (not DRAM!) is required for low-power temporary data storage, which is especially important for vision applications where multiple image frames may need to be captured and processed. Audio and IMU sensing are commonly used together with vision, so it makes sense to integrate them on this platform.
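To put the memory sizes in perspective, the sketch below counts how many whole frames fit in the 64 Mb QSPI SRAM and in the iCE40UP5K's 1 Mb of on-chip RAM, both listed in the specifications. It assumes a 320 × 240 QVGA frame stored at 8 bits per pixel with no padding; the actual bit depth and packing depend on the sensor and the FPGA design.

```c
#include <assert.h>
#include <stdint.h>

/* Bytes for one frame of packed pixels, rounded up to a whole byte.
 * Assumption: no row padding or metadata. */
static uint32_t frame_bytes(uint32_t width, uint32_t height, uint32_t bits_per_pixel)
{
    assert(width > 0 && height > 0 && bits_per_pixel > 0);
    return (width * height * bits_per_pixel + 7u) / 8u;
}

/* Whole frames that fit in a memory of `mbit` megabits (1 Mb = 2^20 bits). */
static uint32_t frames_that_fit(uint32_t mbit, uint32_t bytes_per_frame)
{
    assert(bytes_per_frame > 0);
    uint64_t capacity_bytes = (uint64_t)mbit * 1024u * 1024u / 8u;
    return (uint32_t)(capacity_bytes / bytes_per_frame);
}
```

With 8-bit pixels, one QVGA frame is 76,800 bytes, so the 64 Mb (8 MiB) SRAM holds 109 frames while the 1 Mb (128 KiB) of on-chip RAM holds only one. That gap is why external SRAM matters for multi-frame processing.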

Documented and Open FPGA Code: Ideally built with an open-source toolchain, with well-documented code, plenty of simple examples for each subsystem, and a larger design that ties together the various parts of the SoM.

Applications

Image/Sound/Motion capture

Trigger on scene change/sound/motion to capture video/audio

Capture IMU readings with images for VR/AR applications

Beamform audio using vision input to focus on areas with motion

Low-power edge processing: preserve privacy by processing data on the SoM

Enable developers to detect objects, keywords, and gestures using a low-complexity neural network, with no cloud connectivity required
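One simple way to implement the "trigger on scene change" idea is frame differencing: compare each new frame with the previous one and fire when the mean absolute pixel difference exceeds a threshold. The C sketch below is a reference model of that technique, assuming 8-bit monochrome frames stored as flat arrays. `scene_changed` and its threshold are illustrative only, not part of the SoM's software; a real design might run the comparison in FPGA fabric or on a subsampled image.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Returns 1 if the mean absolute difference between two 8-bit monochrome
 * frames exceeds `threshold` grey levels, else 0.
 * mean > threshold is tested as sum > threshold * n to avoid a division. */
static int scene_changed(const uint8_t *prev, const uint8_t *curr,
                         size_t n_pixels, uint32_t threshold)
{
    assert(prev != NULL && curr != NULL && n_pixels > 0);
    uint64_t sad = 0; /* sum of absolute differences */
    for (size_t i = 0; i < n_pixels; i++)
        sad += (uint64_t)abs((int)curr[i] - (int)prev[i]);
    return sad > (uint64_t)threshold * n_pixels;
}
```

The threshold would need tuning for the lighting conditions and sensor noise; too low a value triggers on noise, too high a value misses small movements.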

Technical Specifications

The main processing element is the Lattice iCE40UP5K FPGA:

8 MAC units

1 Mb RAM

5K LUTs

Image sensor options:

On-board low-power QVGA monochrome global-shutter imager (PixArt PAJ6100U6)

Connector for a color/monochrome rolling-shutter imager (Himax HM01B0); image sensor not included

Connector for an OV7670 flex image sensor; image sensor not included

One Knowles MEMS I2S microphone, expandable to a stereo configuration.

InvenSense IMU 60289, 6-axis gyroscope/accelerometer

Memory:

4 Mb QSPI flash for bitstream/code storage

64 Mb QSPI SRAM for temporary data storage

LEDs:

Tri-colour LED, driven by the FPGA, for user interface feedback

IR LED for low light illumination with frame exposure synchronization

Four GPIOs with programmable I/O voltage

4-wire SPI host interface with programmable I/O voltage

Flexible power options:

Single 3.3 V operation; onboard LDOs can supply 1.8 V and 1.2 V at up to 100 mA to external devices

External 3.3 V, 1.8 V, and 1.2 V supplies for lower-power operation

Supports the Lattice sensAI toolchain, using TensorFlow/Caffe/Keras for model development, quantization, and mapping to the sensAI neural network engines:

Vision-based people detection

Audio keyword detection

Small size: 2 cm × 3 cm
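The onboard LDOs are linear regulators, so the voltage they drop is dissipated as heat: P = (V_in - V_out) × I_out. The sketch below applies that formula to the 3.3 V input and the 1.8 V and 1.2 V outputs, assuming each rail delivers its full 100 mA and ignoring the regulators' quiescent current. It illustrates why supplying 1.8 V and 1.2 V externally can lower overall power consumption.

```c
#include <assert.h>

/* Heat dissipated in a linear regulator, in mW: (Vin - Vout) * Iout.
 * Assumption: quiescent (ground-pin) current is ignored. */
static double ldo_dissipation_mw(double vin, double vout, double iout_ma)
{
    assert(vin >= vout && iout_ma >= 0.0);
    return (vin - vout) * iout_ma; /* V x mA = mW */
}

/* Ideal LDO efficiency Pout/Pin = Vout/Vin (same current in and out). */
static double ldo_efficiency(double vin, double vout)
{
    assert(vin > 0.0 && vout >= 0.0 && vout <= vin);
    return vout / vin;
}
```

At 100 mA, the 1.8 V rail dissipates about 150 mW and the 1.2 V rail about 210 mW in the LDOs, with efficiencies of roughly 55% and 36%. These losses scale with the actual load current, so they are much smaller at light loads.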

Development Kit

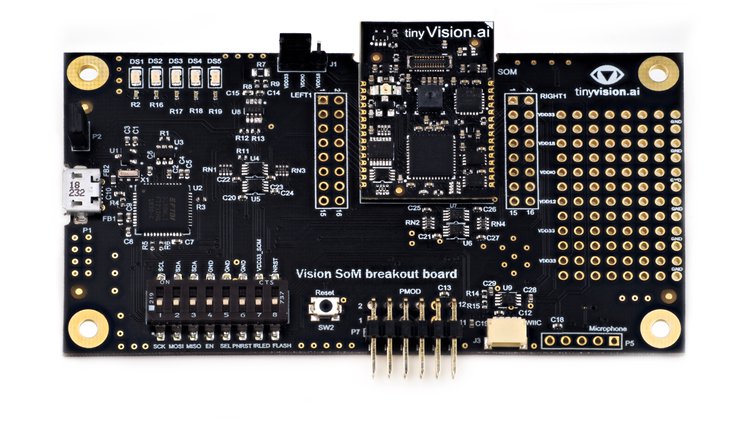

The developer kit breaks out all pins and provides USB connectivity for programming/debugging, power measurement over I2C, LEDs, an additional microphone, IR LEDs for illumination, a PMOD expansion header, and a small prototyping area.


2020-05-24


Copyright © 2024 Suzhou Yinghe Information Technology Co., Ltd. (苏州硬禾信息科技有限公司). All Rights Reserved. ICP filing: 苏ICP备19040198号